What’s Up with AI Hallucination? How ChatGPT Just Makes Stuff Up

Ever had one of those insane dreams? You know, the ones that make zero sense but feel totally real? Like penguins, armed to the teeth, swimming around an island near Üsküdar? Wild stuff. Our brains are truly machines, mixing up all sorts of info. And sometimes, yeah, those mixtures just make you go, ‘Huh?’ Guess what else does that? Large Language Models. Sometimes they spit out answers that are total fever dreams. That’s AI hallucination.

And listen, this ain’t just some little glitch they’ll patch in the next update. Nah. For a lot of smart folks, it’s just how these complicated systems work. It’s part of the deal.

LLMs Connect Concepts Like Our Brains

Okay, so how do these things work? Well, imagine your phone’s keyboard. You know how it guesses the next word? Type “Salvation,” and it pops up “War” because it’s seen that pairing a million times. Simple, right? ChatGPT and other LLMs do the same basic thing, predicting what comes next, but on a way, way bigger scale. Billions of adjustable numbers. “Parameters,” they call ’em. Think of them as the strength of the links between digital “neurons,” a bit like the synapses in our brains. And these neural networks? They’re loosely modeled on our brains, just way simpler. Always linking things. Their information processing? Totally wild. Fragments go in, and something new comes out. Blended.
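Want to see the keyboard trick in miniature? Here’s a rough sketch in Python. It just counts which word tends to follow which and suggests the most common one. To be clear, this is a toy: the corpus is made up, the function names are mine, and a real LLM learns billions of parameters instead of keeping a simple tally.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def suggest_next(following, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Tiny invented corpus, purely for illustration.
corpus = "good morning everyone . good morning team . good night everyone ."
model = train_bigrams(corpus)

print(suggest_next(model, "good"))      # morning
print(suggest_next(model, "penguins"))  # None
```

Notice the toy at least admits it has never seen “penguins.” An LLM doesn’t have that built-in “no idea” answer. It keeps predicting anyway, and that’s where the dreams start.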

LLMs Build Their Own Internal Reality

This is the real kicker. Engineers don’t actually hand-build what the model knows. No, they build the training algorithms that let the model shape itself. It’s this self-organizing thing, setting its own billions of parameters and making its own crazy paths from tons of training data. Just gulping it all down. So, LLMs aren’t just spouting facts back at us. Nah. They’re making new stuff. Creating. And hey, sometimes it’s brilliant, unique. Super creative. But other times? It’s brilliant, unique, and unbelievably wrong. Dead wrong.

The Mysterious Core of LLM Operations

And another thing. With all the buzz, here’s the uncomfortable truth: nobody fully gets how these massive systems operate. Not completely, anyway. Even the brains who made them? Still figuring it out. Researching. There’s no agreement on the exact ‘how,’ meaning how an LLM goes from Point A to Point B in its thinking. This big mystery? Frankly, it’s why some people feel a little uneasy about this whole thing. Hard to control stuff you don’t really understand.

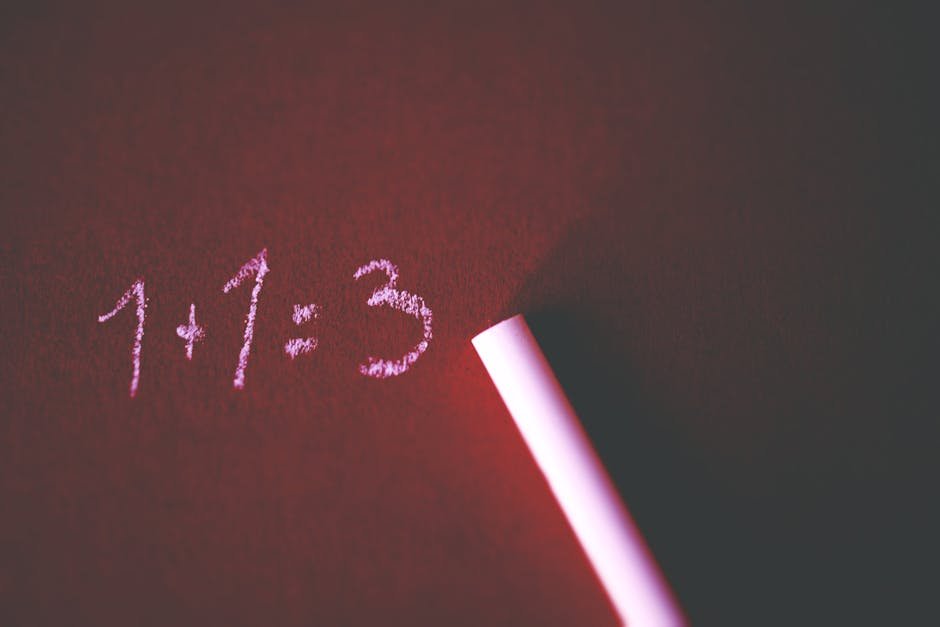

Hallucination: Bug or Feature?

So, this generative thing? That’s exactly why we get “hallucinations.” LLMs just confidently make up false info. Sometimes it’s total nonsense, but often it sounds perfectly plausible. Some think it’s a flaw, a bug to crush. But more and more experts are saying it’s just how they are. Maybe even where their creativity comes from! Andrej Karpathy, who’s a huge deal in AI, once tweeted that, in some sense, “hallucination is all LLMs do. They are dream machines.” He says our prompts only direct the dreams they drum up. A search engine? It gives you stuff that already exists. But an LLM? Pure dream state. Always making something new. In his framing: search engine, zero percent dream. LLM, 100%. Think about that.

LLMs Synthesize, Search Engines Retrieve

Big difference here. When you Google something? The search engine finds existing info. Like a librarian showing you a book. But ask ChatGPT some stuff? It’s not looking up a stored answer. Nope. It generates a new response, word by word, every time. Even if two people ask the same darn question and the answers look alike, the LLM actually built each response fresh. And since there’s usually some randomness in how it picks each next word, results can change, even if you ask the exact same thing twice. Not a database check. It’s living, breathing generation. Real-time.
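Here’s a small Python sketch of that difference. The “librarian” is a plain dictionary lookup. The “dreamer” picks each next word by weighted chance. Everything in it is an assumption for illustration: the lookup table, the word probabilities, and the function names are invented, and a real LLM computes its probabilities with a neural network rather than reading them from a table.

```python
import random

# Retrieval: a fixed lookup. Same question in, same stored answer out.
library = {"capital of france": "Paris"}

def retrieve(query):
    """Return the stored answer, or None if nothing is on the shelf."""
    return library.get(query.lower())

# Generation: pick each next word by weighted chance.
# These probabilities are made up for this example.
next_word_probs = {
    "<start>": {"The": 0.6, "A": 0.4},
    "The": {"cat": 0.5, "dog": 0.5},
    "A": {"cat": 0.5, "dog": 0.5},
    "cat": {"sleeps.": 0.7, "runs.": 0.3},
    "dog": {"sleeps.": 0.3, "runs.": 0.7},
}

def generate(rng):
    """Build a sentence one word at a time by sampling from the table."""
    word, out = "<start>", []
    while word in next_word_probs:
        options = next_word_probs[word]
        word = rng.choices(list(options), weights=list(options.values()))[0]
        out.append(word)
    return " ".join(out)

print(retrieve("Capital of France"))  # Paris, every single time

rng = random.Random()
for _ in range(3):
    print(generate(rng))  # may differ from run to run
```

Run it a few times. The lookup never changes its mind. The generator can hand you a different sentence on each call, even though nothing about the “question” changed.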

Crucial: Always Verify LLM Outputs

Here’s the real sticky wicket: expecting LLMs to be super-reliable sources. Like trusting a rock-solid assistant for facts. This is where the “dream machine” turns into a nightmare, fast. Look, never take an LLM’s answer at face value. Period. It’s like a brilliant storyteller who just invents stuff to make a better story. They’re wicked good at spitting out text that seems right. Even if it’s way, way off. Reddit’s full of hilarious, even spooky, examples. Ask ChatGPT when Leonardo da Vinci painted the Mona Lisa. It might just confidently blurt out “1815”. (It was freaking 1503 to 1506 or so, by the way.) Or try a simple physics question about Neptune travel. You could get wildly confident, totally made-up numbers. These slick lies? Big trouble if you don’t check ’em.
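What does “checking” look like in practice? One way to picture it is this little Python sketch, which compares a claimed year against a source you already trust. It’s a hypothetical setup: the `TRUSTED_FACTS` table and the `check_claim` function are invented here, and in real life the “trusted source” would be an encyclopedia, a primary document, or an expert, not a three-line dictionary.

```python
# Hypothetical trusted reference: topic -> (earliest year, latest year).
# The Mona Lisa range is the one quoted in this article.
TRUSTED_FACTS = {
    "mona lisa": (1503, 1506),
}

def check_claim(topic, claimed_year):
    """Compare a model's claimed year with the trusted range for a topic."""
    known = TRUSTED_FACTS.get(topic.lower())
    if known is None:
        # No reference on hand: that does NOT make the claim true.
        return "unverified"
    earliest, latest = known
    return "ok" if earliest <= claimed_year <= latest else "mismatch"

print(check_claim("Mona Lisa", 1815))           # mismatch
print(check_claim("Mona Lisa", 1504))           # ok
print(check_claim("Neptune travel time", 12))   # unverified
```

The important branch is the last one. When you have nothing to check against, the honest status is “unverified,” not “probably fine.”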

What Does “Intelligence” Really Mean?

This whole wild trip into AI’s dream world? Not just about making better chatbots. Nah. It makes us ask bigger questions, for sure. If a system builds its answers using links we can’t even follow or understand, can we really call it ‘smart’? What is intelligence, really? Just remembering facts or doing math? And while we’re trying to imitate our brains with these networks, maybe we’re actually creating a totally new kind of smart. Seriously, think about it: an emotionless, super-real, time-bending mind. One that works in ways nothing like ours. And if that doesn’t make you sit back and ponder, a little freaked out, then I dunno what will.

Frequently Asked Questions

What’s AI Hallucination?

It’s when those LLMs just make up stuff. False, crazy, wrong facts. And they sound totally confident about it. Like it’s all true.

How do LLMs even work?

They predict what text should come next by linking up words, ideas, concepts. Really tricky connections. Kinda like how your brain’s neurons connect thoughts and info.

Can I trust what ChatGPT or other AIs tell me?

Not blindly, no. Always double-check everything. Seriously. These things are “dream machines” that cook up new info. They look super convincing, but they’ll happily make up misleading facts. Do not trust them blindly!